In ILC NewsLine last week, Nick Walker wrote a guest Director’s Corner where he outlined the steps to the Technical Design Report (TDR) and Barbara Warmbein wrote a feature article summarising some highlights of the Baseline Technical Review (BTR) meeting at DESY. Today, I write a third article on the same basic subject of how to get from here to there, there being the TDR.

One important decision at the DESY BTR was to retain a flux concentrator in the baseline for the positron source.

Our overall programme has had two main thrusts since we completed the ILC reference design in 2007: first, completing the key R&D technical demonstrations that are needed to prove technical viability of the ILC; and second, evolving the ILC design toward one optimised for cost, performance and risk. Today, I address the latter topic, and in particular, the multitude of remaining technical decisions that are needed to completely define the TDR baseline design.

The ILC Reference Design Report (RDR) was completed in 2007. It presents a complete concept for a linear collider that could achieve the physics performance goals set out by the International Linear Collider Steering Committee. The next phase of our work is directed towards producing a Technical Design Report that can be the basis of government approvals and a final engineering design.

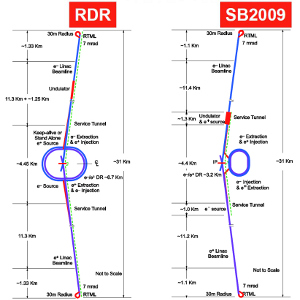

In late 2009, the ILC project managers (Marc Ross, Nick Walker and Akira Yamamoto) made a ‘top-down’ proposal for a set of changes to the RDR baseline design aimed at making significant cost savings for the TDR without markedly reducing performance. The underlying motivation was to find areas where costs could be reduced in order to compensate for inevitable cost growth in other areas. Too many large projects have been plagued by large cost increases following initial design and costing. As a result, we have been strongly motivated to avoid that problem by being particularly diligent about containing cost growth since the RDR. One result has been the significant changes we have made to the baseline design.

Evaluating the proposed major changes to the baseline has been the focus of much of our effort over the past two years. A process involving workshops, reviews and finally formal decisions by the Global Design Effort (GDE) director resulted in defining the average gradient and support of gradient spread; changing to a single main linac tunnel and working on associated high-level radiofrequency power solutions; reducing the beam power parameter set, including reducing the size of the damping rings; and relocating the positron source to the central campus, including provisions for low-energy running. Having completed the evaluation of these major changes, we have moved on to the multitude of other final design decisions needed to completely define the baseline for the TDR.

Andy White (University of Texas at Arlington) was one of the ‘stakeholder’ representatives from the ILC detector community at the DESY Baseline Technical Review meeting. Image: GDE

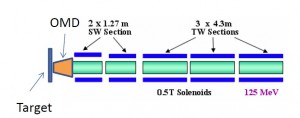

One example of such a decision, made at the BTR at DESY, was to retain a flux concentrator for positrons in the TDR baseline rather than adopt a more conservative quarter-wave transformer magnet. However, the flux concentrator will have a more realistic field of 3.2 teslas (T) compared to the originally proposed goal of 4 T. Simulations have indicated that the associated loss of capture efficiency will be only a few percent. In the end, the risks in this approach were deemed acceptable, given the approximate factor of two increase in capture efficiency. This decision also supports continuing the ongoing R&D programme and prototype tests aimed at demonstrating proof of principle by the end of the Technical Design Phase.

The general process for making the remaining decisions to completely define the TDR baseline is focussed on four Baseline Technical Review meetings. The first of these, in July, dealt with the damping rings. The second, held in October at DESY, covered the electron source, the positron source, the ring-to-main-linac optics (including bunch compressors), the beam delivery system and machine-detector interfaces, and finally central region integration. Many decisions and actions were addressed at this meeting.

A pensive project manager, Nick Walker (DESY) watches presentations at the DESY Baseline Technical Review. Image: GDE

As Warmbein’s article points out, one outcome of the DESY BTR meeting was a “decision to opt for a two-stage bunch compressor rather than a single-stage one.” Interestingly, this particular choice represents a success of our process and went against the project managers’ proposal to adopt a single-stage bunch compressor with a compression ratio of 20. Nick Walker, project manager for the European region, commented as follows regarding this decision:

The primary arguments were based on an already-accepted reduction in the damping ring bunch length from 9 mm to 6 mm and an estimated cost saving of about 0.5% of the project costs. However, the participants at DESY felt that the two-stage compressor affords greater flexibility at the interaction point parameters than the single stage, allowing a higher maximum compression ratio of up to 40 (resulting in a bunch length of roughly 0.15 mm), albeit at the cost of a larger uncorrelated energy spread. The reported benefit of additional flexibility in bunch length was considered to outweigh the marginal cost saving associated with the single-stage compressor. With this change, beam parameter sets including the ‘low-bunch charge’ parameter set presented at BAW2 in January 2011 are in principle possible but, importantly, were neither endorsed nor adopted.
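As a rough check of the figures quoted above, the final bunch length of an idealised compressor is simply the incoming bunch length divided by the compression ratio. The short sketch below applies that relation to the 6 mm damping ring bunch length and the two compression ratios mentioned (20 and 40). The function and constant names are illustrative assumptions, and the single-stage result is derived here rather than quoted in the article; real compressors also involve effects, such as the energy spread trade-off noted above, that this arithmetic ignores.

```python
# Idealised bunch compression arithmetic (illustrative only; names are hypothetical).

def compressed_bunch_length_mm(initial_length_mm: float, compression_ratio: float) -> float:
    """Return the final bunch length for an ideal compressor: initial length / ratio."""
    if compression_ratio <= 0:
        raise ValueError("compression ratio must be positive")
    return initial_length_mm / compression_ratio


# Values taken from the text above.
DAMPING_RING_BUNCH_LENGTH_MM = 6.0   # reduced from 9 mm
SINGLE_STAGE_RATIO = 20              # project managers' single-stage proposal
TWO_STAGE_MAX_RATIO = 40             # maximum ratio quoted for the two-stage option

single_stage_mm = compressed_bunch_length_mm(DAMPING_RING_BUNCH_LENGTH_MM, SINGLE_STAGE_RATIO)
two_stage_mm = compressed_bunch_length_mm(DAMPING_RING_BUNCH_LENGTH_MM, TWO_STAGE_MAX_RATIO)

print(f"single-stage (ratio {SINGLE_STAGE_RATIO}): {single_stage_mm:.2f} mm")
print(f"two-stage (ratio up to {TWO_STAGE_MAX_RATIO}): {two_stage_mm:.2f} mm")
```

The two-stage value reproduces the "roughly 0.15 mm" quoted above, which is half the bunch length the same relation gives for a single stage at a ratio of 20.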

The process of evaluating the proposed changes has been a long and rigorous one, and two more Baseline Technical Reviews still remain for early 2012. However, it is clear that the GDE project managers deserve the credit for taking the lead, proposing a specific set of changes and leading the often difficult process of including all stakeholders, convincing review committees and colleagues, and deciding on each change or detailed specification. They have done a terrific job, and now we are in a good position to produce a TDR – a big step toward realising the ILC.
